coreNet

by Protostar Labs

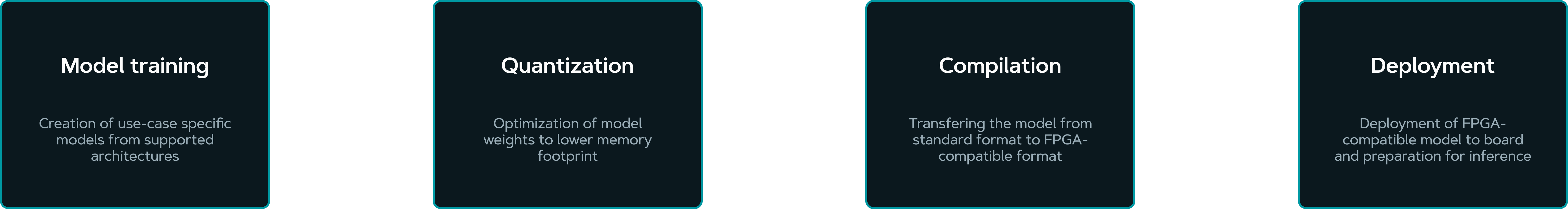

coreNet deploys AI models to FPGAs through a streamlined pipeline that runs from model quantization to inference on the FPGA. The pipeline offers no-code configuration of selected AI models and automatic deployment to selected FPGA boards.

01 How it works

02 Features

coreNet simplifies the deployment of AI models to FPGAs. Its no-code workflow lets users configure selected AI models without programming knowledge, and its end-to-end pipeline covers every step from model quantization to inference on FPGAs. A growing list of supported models and boards keeps compatibility broad and flexible.

No-code solution

Fully configurable deployment process; no expert FPGA knowledge needed

End-to-end pipeline

Start from scratch by training a model, or port an existing model to an FPGA board

Growing list of supported models and boards

Continuously updated list of supported devices and models

Automated model deployment

Automatically converts and optimizes AI models for efficient performance on selected FPGA boards

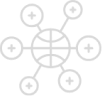

03 Deep Layer Library

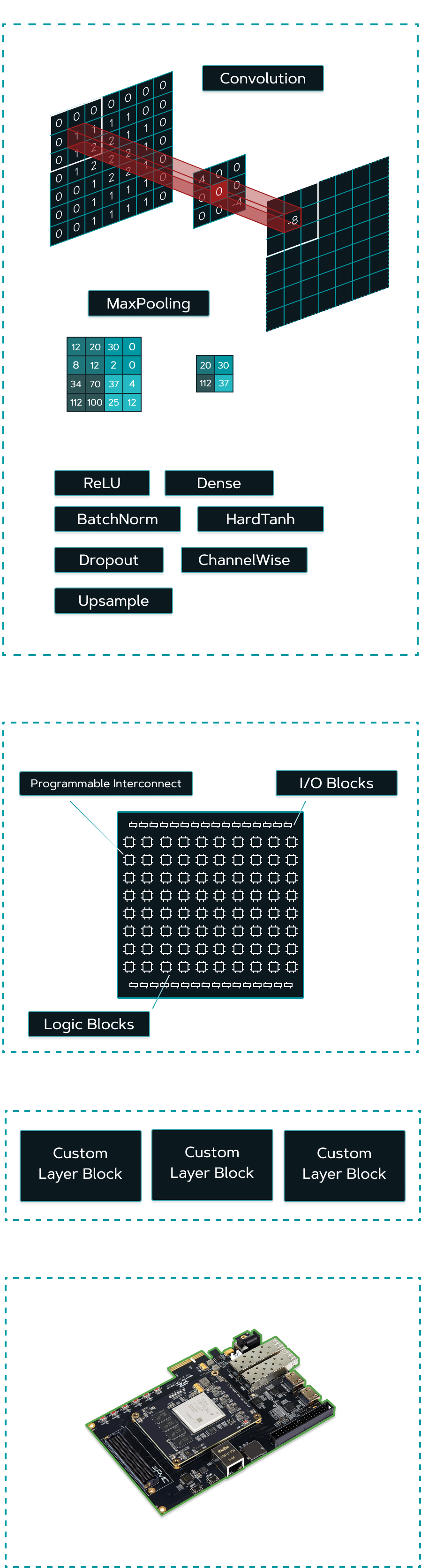

The Deep Layer Library consists of neural network layers, complex building blocks, and operations implemented as IP cores, ready to be deployed on FPGAs. Each element combines a layer from a standard Python neural network library with a supporting IP core written in VHDL or HLS. Connecting the software side and the hardware side properly is key to meeting the FPGA performance requirements.
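One thing a Python-side layer and its hardware counterpart have to agree on is the number format. The sketch below illustrates the general idea with symmetric 8-bit quantization of a small dense layer in plain Python: operands become integers, the multiply-accumulate runs entirely on integers, and a single rescale recovers the floating-point result. All names and values here are illustrative assumptions; this is not coreNet's implementation or API.

```python
QMAX = 127  # largest magnitude used in symmetric signed 8-bit quantization


def quantize(values):
    """Return (integers in [-127, 127], scale) for a list of floats."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / QMAX if max_abs > 0 else 1.0
    q = [max(-QMAX, min(QMAX, round(v / scale))) for v in values]
    return q, scale


def dense_int(x_q, x_scale, w_q_rows, w_scale, bias):
    """Dense layer: integer multiply-accumulate, then one rescale per output."""
    out = []
    for row, b in zip(w_q_rows, bias):
        acc = sum(xi * wi for xi, wi in zip(x_q, row))  # integer accumulator
        out.append(acc * x_scale * w_scale + b)
    return out


def dense_float(x, w_rows, bias):
    """Floating-point reference for the same layer."""
    return [sum(xi * wi for xi, wi in zip(x, row)) + b
            for row, b in zip(w_rows, bias)]


# Hypothetical layer: 4 inputs, 2 outputs.
x = [0.5, -1.0, 0.25, 2.0]
w = [[0.1, 0.2, -0.3, 0.4], [-0.5, 0.6, 0.7, -0.8]]
bias = [0.05, -0.05]

x_q, x_scale = quantize(x)
# One shared scale for the whole weight matrix (per-tensor quantization).
w_q_flat, w_scale = quantize([v for row in w for v in row])
w_q = [w_q_flat[i:i + 4] for i in range(0, len(w_q_flat), 4)]

y_float = dense_float(x, w, bias)
y_int = dense_int(x_q, x_scale, w_q, w_scale, bias)
max_err = max(abs(a - b) for a, b in zip(y_float, y_int))
print("float:", y_float)
print("int8 :", y_int)
print("max abs error:", max_err)
```

The integer accumulator is the part that would map onto FPGA logic; the scales stay on the software side and are applied once per output, which is why both sides need to share the same quantization parameters.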

The standard neural network layers are similar to their FPGA-quantized counterparts and serve the same purpose. Fully connected (dense) layers in the Deep Layer Library are implemented with FPGA combinatorial logic in mind, to achieve the best performance and minimum latency. The convolutional layer is ported to make optimal use of the digital signal processing (DSP) elements, which are a limited resource on the FPGA. This allows us to combine powerful base layers into the complex building blocks of newer models.
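To see why DSP elements become the limiting resource for convolutions, it helps to count multiply-accumulate (MAC) operations. The sketch below is a back-of-the-envelope estimate only: it assumes a standard 2D convolution, an idealized design in which each DSP element completes one MAC per clock cycle, and a hypothetical budget of 200 DSP elements that does not correspond to any particular board. The layer shape is the first convolution of the classic LeNet-5 architecture (one 32x32 input channel, six 5x5 kernels, 28x28 output).

```python
def conv2d_macs(in_ch, out_ch, kernel, out_h, out_w):
    """Multiply-accumulate operations for one standard 2D convolution."""
    return in_ch * out_ch * kernel * kernel * out_h * out_w


def ideal_cycles(macs, dsp_units):
    """Clock cycles needed if every DSP unit performs one MAC per cycle."""
    return -(-macs // dsp_units)  # ceiling division


# First convolution of LeNet-5: 1 -> 6 channels, 5x5 kernel, 28x28 output.
macs = conv2d_macs(in_ch=1, out_ch=6, kernel=5, out_h=28, out_w=28)

# Hypothetical DSP budget, chosen only for illustration.
cycles = ideal_cycles(macs, dsp_units=200)

print("MACs:", macs)
print("ideal cycles with 200 DSP units:", cycles)
```

Real designs fall short of this ideal because of memory bandwidth, pipeline stalls, and routing, so the estimate is a lower bound on latency rather than a prediction.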

04 Benefits

coreNet eliminates the need for expert knowledge, making FPGA technology accessible to a wide audience. It improves performance through lower power consumption, faster inference, and low latency. Models leave the platform production-ready, optimized for integration into production environments.

By simplifying the deployment process, coreNet significantly reduces setup time, streamlining the transition from development to deployment, and enabling users to quickly harness the power of FPGAs.

No expert knowledge required

Ease of use enables a wide audience of users to benefit from FPGAs

Improved performance

Benefit from lower power consumption, faster inference and low latency

Production-ready models

Models are optimized and ready for integration into production environments

Simplified deployment

Reduced setup time and simplified process from development to deployment

05 Device Support

| Supported Boards | |
|---|---|
| PYNQ-Z2 | ZCU102 |
| PYNQ-Z1 | ZCU111 |
| PYNQ-ZU | ZCU208 |
| Kria KV260 | Ultra96V2 |
| ZCU104 | ZUBoard 1CG |

| Supported Models | |
|---|---|
| YOLO v3, v4, v5 | ResNet 18, 34, 50 |
| VGG-16 | LeNet-5 |